When Creativity Became a Science

World War II, the nuclear arms race, humanistic psychology, and the origins of modern creativity research

This is a fascinating story about how creativity research started in the 1950s and 1960s. Before 1950, psychologists didn’t study creativity. And then, creativity research went from nonexistent to everywhere. It impacted government, technology, culture, and the military. It’s a story of economics, great-power rivalry, technological invention, and cultural transformation. Even LSD makes an appearance!

Why didn’t psychologists study creativity before 1950? The first barrier was behaviorism. Since the 1920s, American psychology had been dominated by behaviorism—think of Pavlov’s salivating dog and Skinner’s experiments with pigeons. Behaviorists studied only behaviors they could see. Anything that happened inside the brain was off limits—they reasoned that because the mind couldn’t be directly observed, it couldn’t be studied scientifically. By the 1970s, this approach had been rejected by most psychologists, but 1950 was the heyday of behaviorism, and behaviorism didn’t have much to say about creativity. Even the famous behaviorist B. F. Skinner agreed that behaviorism implied that human behavior was largely predictable. According to his theory, people generally behaved in ways that you could predict from their environment and from their history. In behaviorist theory, you didn’t need a science of creativity.

The second barrier was Freudian psychoanalysis. To a Freudian, creativity was a subliminal activity masking unexpressed or instinctual wishes; the people who chose to become artists were just redirecting repressed and unfulfilled sexual desires. The arts were based on illusion and the creation of a fantasy world, and were thought to be similar to a psychiatric disorder called neurosis. In this approach, if you wanted to study creativity, you would simply study repression and neurosis: the psychodynamics of the superego, the ego, and the id. In psychoanalytic theory, you didn’t need a science of creativity.

The third barrier was intelligence testing. Most psychologists thought that exceptional creativity was primarily a byproduct of high intelligence. Soon after World War I, Lewis Terman of Stanford University adapted Frenchman Alfred Binet’s new intelligence test for the United States, and for decades after that, the study of talent and human potential was dominated by the study of intelligence. If you believe that creative people are creative primarily because they’re more intelligent, then creativity research is basically the same thing as IQ research. If creativity is simply intelligence, you don’t need a science of creativity.

Everything changed in 1950. The famous psychologist J. P. Guilford gave an influential lecture, titled simply “Creativity,” at the 1950 American Psychological Association annual conference. After Guilford’s very public stamp of approval, other psychologists stepped in. Research agencies started funding creativity research, and more psychologists got involved. Studies of creativity blossomed. During the 1950s, there were almost as many studies of creativity published in each year as there were for the entire 23 years prior to Guilford’s lecture.

Even the most influential psychologist can’t change an entire academic field overnight; you can put the message out there, but the audience has to be ready to receive it. And Guilford’s APA address was the right message at the right time. In the years after World War II, the United States was an economic powerhouse, a machine exporting its products around the world, generating jobs for everyone. But the booming economy of the 1950s was very different from the 21st century’s information technology boom. There were no start-ups, no venture capital, no NASDAQ. Instead, almost everyone worked for a large, stable corporation. The workplace was much more structured than today. IBM was legendary for requiring each employee to wear a white shirt and a navy-blue suit, every day. Businesses were organized into strict hierarchies—almost like the military—and everyone knew their place in the pecking order.

Like the military, these companies were extremely efficient. Top-down structure is great for increasing efficiency and reducing cost, generating the most widgets at the lowest price. But many thoughtful commentators were concerned. After all, the U.S. had just fought World War II to defend freedom, and its Cold War adversary—the Soviet Union—was criticized as a restricted and controlled society. It was disturbing that U.S. society was beginning to seem increasingly constrained and regimented. Americans were increasingly worried about this “age of conformity.” A 1956 book called The Organization Man by William H. Whyte became a national bestseller. Its theme—that the regimented economy was resulting in an America full of uncreative, identical conformists—was echoed in similar books through the early 1960s. In 1961, management theorists Tom Burns and G. M. Stalker published The Management of Innovation, an influential book that argued that rigid hierarchical organizations were rarely innovative. Before this book, it was taken for granted that there was only one kind of effective organization—a top-down hierarchy, with rigid levels from the CEO at the top to the line workers at the bottom. But Burns and Stalker argued that creativity came from a different kind of company: one with flat hierarchies, empowered workers with autonomy, and authority distributed throughout the organization, not just flowing down from the top. The research psychologists who studied creativity in the late 1950s and early 1960s were profoundly influenced by these nationwide concerns.

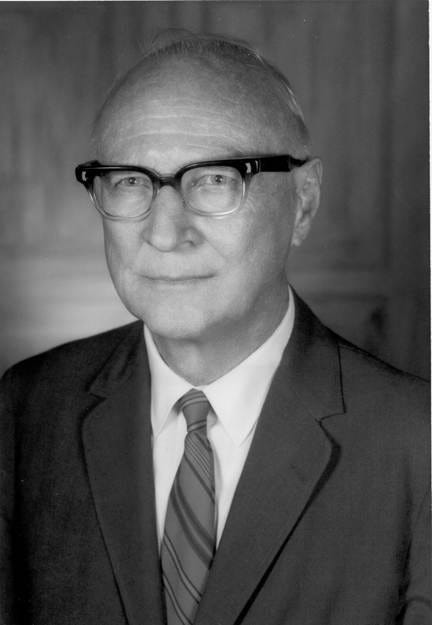

Guilford was already a famous psychologist when World War II started. He was tapped by the U.S. government to help the military carry out the most massive testing program in history. The military wanted efficiency, too. They needed to match each person’s skills with the specific demands of the job, and psychologists came up with tests for every cognitive ability and personality trait. Like Guilford, many of the other postwar creativity scholars got their start evaluating personality traits for the military. Donald MacKinnon and Morris Stein worked for the Office of Strategic Services (OSS), the predecessor to the CIA. They worked at the Assessment Center, a group that was charged with evaluating which people would best be suited for demanding roles overseas: irregular-warfare operatives, spies, counterespionage agents, and leaders of resistance groups. It was pretty obvious that you needed more than raw IQ to be a good spy, and the military needed more than a simple IQ test to find the best spies. Creativity, they reasoned, would help keep you alive behind enemy lines. Guilford worked for the U.S. Air Force, developing tests to identify the intellectual abilities essential to flying a plane.

After World War II, these military psychologists founded several research institutes to study creative individuals. MacKinnon founded the Institute of Personality Assessment and Research (IPAR) at the University of California at Berkeley in 1949. Guilford founded the Aptitudes Research Project at the University of Southern California in the early 1950s. Stein founded the Center for the Study of Creativity and Mental Health at the University of Chicago in 1952.

In the 1950s, we can see how conceptions of creativity reflected the social backdrop of that time. Creativity was seen through the lens of tensions between powerful nations. It was thought to be essential to navigate international threats and to win the competition for scientific and economic dominance. Around that time, the government began to give research grants to psychologists studying creativity. They funded research to identify creative talent early in life, to educate for creativity, and to design more creative workplaces. The goal of this research was no less than to better understand freedom and its place in American society. As psychologist Morris Stein wrote at the time,

To be capable of creative insights, the individual requires freedom—freedom to explore, freedom to be himself, freedom to entertain ideas no matter how wild and to express that which is within him without fear of censure or concern about evaluation.

In 1950, the government created the National Science Foundation, and one of its first programs was the provision of fellowship funding to graduate students. To do this successfully, the NSF wanted to develop a test to identify the most promising future scientists. They knew from their own personal experience that IQ alone wasn’t enough to make a great scientist. Personality psychologist Calvin W. Taylor led that research effort from 1952 to 1954; when he stepped down in 1954, he drew on his NSF connections to get funding for a series of conferences at the University of Utah on the identification of creative scientific talent, and the first one was held in 1955.

The Utah Conferences brought together most of the psychologists studying creativity. The fifth conference in 1963 even attracted Harvard professor and legendary LSD guru Timothy Leary. In the photo, he’s in the middle of the front row.

Creativity research was a high-stakes game during the nuclear arms race. In 1954, humanistic psychologist Carl Rogers warned that without creativity, “The lights will go out. International annihilation will be the price we pay for a lack of creativity.”

American creativity researchers believed they were defending freedom and helping to save the world from nuclear annihilation. Many people in America and in Europe agreed with Stein that liberal democratic societies were those most conducive to creativity. At the first Utah Conference in 1955, Frank Barron of the Berkeley IPAR described the creative society; it had “freedom of expression and movement, lack of fear of dissent and contradiction, a willingness to break with custom, a spirit of play as well as of dedication to work.” By 1960, creativity scholars began to sound as if they were writing the playbook for the hippie era that came only a few years later. Barron also claimed to have first introduced Harvard’s Timothy Leary to the psychoactive properties of the psilocybin mushroom. Creativity research flourished in the 1960s, alongside psychedelics, hippies, anti-authoritarianism, and lenient childrearing practices. Leary would go on to become a famous champion of psychedelic drugs, widely known for his saying “Turn on, tune in, drop out.”

People’s ideas about creativity are always shaped by, and reflect, their society and their historical time. We shouldn’t be surprised that postwar American psychologists emphasized a conception of creativity that fit with a liberal democratic vision of society, one that contrasted the United States with the Soviet Union during the darkest years of the Cold War. I haven’t read anything from that era reflecting on how these conceptions of creativity may have been socially and historically unique. People often fail to notice how their own conceptions and values are historically and culturally contingent. A society’s views of creativity largely mirror the broader dynamics and characteristics of that society and era itself.

This essay is excerpted from my book with Danah Henriksen, Explaining Creativity: The Science of Human Innovation, Chapter 2, published in 2024 by Oxford University Press.

Further reading:

Burns and Stalker’s influential management book: The Management of Innovation. 1961. Tavistock Publications.

Guilford’s lecture at the 1950 APA conference: “Creativity.” American Psychologist, 5(9), 444–454.